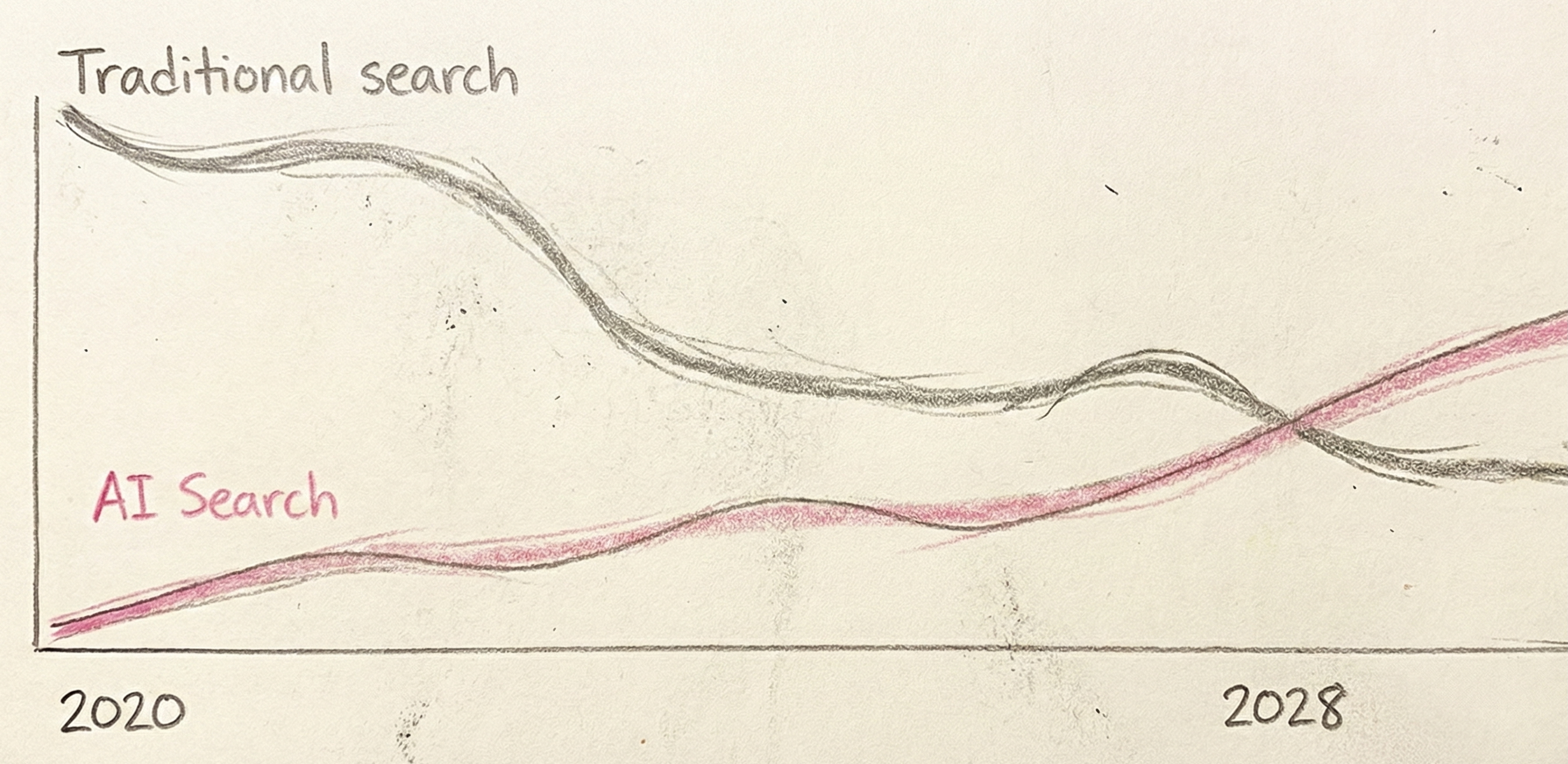

Search changed faster between 2024 and 2026 than it did in the prior decade.

Google AI Overviews now reach more than 2 billion users per month across 200+ countries, according to a January 2026 Guardian investigation. ChatGPT search moved from beta to mainstream usage—OpenAI reported over 1 billion web searches in ChatGPT during a single week in April 2025. Perplexity, Gemini, Claude, and Microsoft Copilot have all become meaningful discovery channels in their own right.

The result is a structural shift in how people find brands.

Traditional search engine optimization (SEO) focused on rankings and clicks. But in AI-driven search experiences, users often get a complete answer without clicking anything. If your brand is not cited, mentioned, or recommended inside the AI-generated response, you may effectively be invisible—regardless of how well you rank in classic search.

Multiple studies now show significant downstream impact. One Authoritas analysis cited by The Guardian found click-through rates dropping by up to 80% for some queries when AI summaries appear. Google has disputed aspects of the methodology, but the directional change is clear: visibility is shifting from links to answers.

This shift has created two closely related disciplines that marketing teams now need to manage: Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO).

This guide covers 11 tools built specifically for that job. Each section explains what a tool tracks, how reporting works, where it fits in your stack, and when it makes sense to use it—so you can match the right tool to your team’s maturity, use case, and budget.

AI answers pull from indexed content, but not all appearances are equal.

A citation means the AI engine links to your source. Users can click through. This is the closest analog to a traditional search result.

A mention means your brand or content is referenced in the answer text without a link. You get visibility, but no direct path back to your site.

A "no link" mention is simply another name for a mention: your brand appears, but users have no obvious way to reach you. In some interfaces, this is the default.
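To make the distinction concrete, here is a minimal Python sketch that labels a single AI answer as a citation, a mention, or neither. It assumes you already have the answer text and the list of linked source URLs. The function name, the `Appearance` enum, and the plain substring match on the brand name are illustrative assumptions, not how any specific tool works.

```python
from enum import Enum
from urllib.parse import urlparse


class Appearance(Enum):
    CITATION = "citation"  # answer links to the brand's domain
    MENTION = "mention"    # brand named in the text, no link
    ABSENT = "absent"      # brand does not appear at all


def classify_appearance(answer_text, cited_urls, brand_name, brand_domain):
    """Label one AI answer for one brand (hypothetical helper)."""
    domain = brand_domain.lower()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return Appearance.CITATION
    # Naive check: a real pipeline would need to handle aliases and misspellings.
    if brand_name.lower() in answer_text.lower():
        return Appearance.MENTION
    return Appearance.ABSENT
```

A production version would need sturdier brand matching, but the three-way split is the part that matters for reporting.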

What drives inclusion varies by engine. A Yext analysis published in October 2025 examined 6.8 million citations across 1.6 million responses. The finding: 86% of AI citations came from sources brands "own or manage," meaning first-party sites and listings. Gemini leaned especially heavily on brand websites themselves, which supplied 52.15% of its citations.

This means GEO isn't just about earning links from third parties. It's also about ensuring your own properties are structured and authoritative enough to be pulled into answers.

A citation is factual. The AI is saying "here's where I found this."

A recommendation is behavioral. The AI is saying "consider this option."

Both matter, but they require different measurement. A brand can be cited frequently for definitions or statistics but never recommended when users ask "what should I use?" Tracking both tells you whether you're seen as an authority, a solution, or neither.
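As a rough illustration of measuring the two separately, the sketch below computes a citation rate and a recommendation rate over a set of tracked prompts. The `Observation` record and the sample data are made up for the example; how you decide that an answer "recommended" a brand is an assumption left outside the sketch.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    prompt: str
    cited: bool        # answer linked to the brand as a source
    recommended: bool  # answer suggested the brand as an option


def rates(observations):
    """Return (citation_rate, recommendation_rate) as fractions of prompts."""
    total = len(observations)
    if total == 0:
        return 0.0, 0.0
    cited = sum(o.cited for o in observations)
    recommended = sum(o.recommended for o in observations)
    return cited / total, recommended / total


# Illustrative data: cited for definitions and stats, never recommended.
sample = [
    Observation("what is a CRM", cited=True, recommended=False),
    Observation("CRM adoption statistics", cited=True, recommended=False),
    Observation("best CRM for mid-market SaaS", cited=False, recommended=False),
    Observation("what CRM should I use", cited=False, recommended=False),
]
citation_rate, recommendation_rate = rates(sample)
print(f"cited in {citation_rate:.0%}, recommended in {recommendation_rate:.0%}")
# prints "cited in 50%, recommended in 0%"
```

A brand with this profile is being treated as an authority but not as a solution, which is exactly the gap a single blended "visibility" number would hide.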

At minimum, track:

Google AI Overviews aren't an experiment. They're a default.

With more than 2 billion monthly users across 200+ countries, AI Overviews are now a primary interface for search. Semrush data showed AI Overview presence in its dataset rising from 6.49% in January 2025 to nearly 25% in July 2025, before settling at 15.69% in November 2025. The mix of query types shifted too: the informational share declined while commercial and transactional queries increasingly triggered AI Overviews.

When an AI summary answers the question, users often don't scroll. The drop in click-through rate, even if its magnitude is debated, is real enough that brands are losing traffic without losing rankings.

ChatGPT search launched on October 31, 2024, initially for paid subscribers. By December 16, 2024, it had expanded to all logged-in users. By February 5, 2025, it was available to everyone where ChatGPT operates.

The pace accelerated. By April 2025, OpenAI had added shopping-oriented improvements and reported that users made "more than a billion web searches in ChatGPT" in a single week.

Perplexity, Gemini, and Claude have smaller user bases but growing influence—especially among researchers, analysts, and early adopters who shape purchase decisions.

These aren't replacements for Google. They're parallel discovery paths. If your brand doesn't exist in these answers, you're invisible to a growing segment.

Here's the scenario that keeps marketing leaders awake:

A potential customer asks ChatGPT, "What's the best CRM for mid-market SaaS?" Your competitor appears in the answer. You don't. The user never searches for you because they already have a recommendation.

This isn't hypothetical. It's happening. And unlike SEO, where you could at least see you weren't ranking, many teams don't know they're missing from AI answers until they deliberately check.

The boards that pay attention to market share and brand awareness are starting to ask: "Where do we stand in AI search?"

A complete platform should track:

If a tool only covers AI Overviews, you're missing most of the landscape.

You need to see:

Without competitive context, you're optimizing in the dark.

The difference between useful and useless analytics is explainability.

A basic tool tells you: "You appeared in 12% of tracked prompts."

A useful tool tells you: "You're not appearing for [prompt X] because [competitor Y] is being cited from [source Z] that covers [topic] you don't address."

Explainability turns data into action.
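The gap between those two kinds of output can be sketched in a few lines of Python. The function below returns both the headline rate and a list of prompts where the brand is missing, along with the brands that appeared instead. The data structure, brand names, and prompts are all hypothetical, and the sketch only covers the "who appears instead" step, not the source-level or topic-level reasons a full diagnostic would add.

```python
def visibility_report(results, brand):
    """results maps prompt -> set of brands appearing in the answer (illustrative data)."""
    total = len(results)
    appeared = [p for p, brands in results.items() if brand in brands]
    rate = len(appeared) / total if total else 0.0
    # Gaps: prompts where the brand is missing, with the brands that took the slot.
    gaps = {
        p: sorted(brands)
        for p, brands in results.items()
        if brand not in brands and brands
    }
    return rate, gaps


results = {
    "best CRM for mid-market SaaS": {"CompetitorY"},
    "CRM with built-in billing": {"OurBrand", "CompetitorY"},
    "CRM pricing comparison": {"CompetitorY", "CompetitorZ"},
    "how to migrate CRM data": set(),
}
rate, gaps = visibility_report(results, "OurBrand")
print(f"appeared in {rate:.0%} of tracked prompts")
for prompt, competitors in gaps.items():
    print(f"missing from '{prompt}': {', '.join(competitors)} appeared instead")
```

The first printed line is the basic tool's answer. The per-prompt lines are the starting point for the useful one.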

Data without action is a dashboard no one checks after the first week.

Look for:

Enterprise features matter:

AI answers vary by geography, language, and user history. Quality platforms:

Best for: Marketing leaders who need a clear executive view plus an execution plan

HeyAmos monitors AI Search visibility across Google AI Overviews, ChatGPT search, Perplexity, Gemini, Claude, and Copilot. Tracking includes your brand and competitors, with visibility measured at the prompt level.

CMO Report: A summary designed for executive consumption. It includes your rank vs. competitors, the sources driving answers in your category, and prompts to watch. The goal is a report your leadership team will actually read—not a 47-tab spreadsheet.

"Why you're not showing up" diagnostics: HeyAmos doesn't just tell you that you're missing from answers. It explains the gaps—which competitor sources are being cited, what topics you're not covering, where your content falls short.

Data-driven GEO playbook: Prioritized actions based on your specific gaps. The playbook updates as results change, so recommendations stay current.

Performance dashboard: Tracks actions completed vs. visibility impact. This closes the loop between "we did the work" and "it moved the needle."

HeyAmos serves as a primary GEO platform. It's not an add-on to existing SEO tools—it's purpose-built for AI Search visibility with an emphasis on turning findings into action.

Teams use HeyAmos both in-house and with agency support. The platform includes human guidance for teams that want help interpreting findings and prioritizing execution.

Best for: Teams that want SEO + AI Search visibility inside one platform

Semrush One, launched October 29, 2025, unifies traditional SEO and AI Search visibility across Google Search, AI Overviews, ChatGPT, Gemini, and Perplexity. Semrush cites an AI visibility dataset of 90 million prompts alongside its established keyword and backlink databases.

Semrush added AI Mode as a search engine option in Position Tracking on July 22, 2025. AI Overviews data appears across multiple tools: Position Tracking, Organic Research, Domain Overview, Keyword tools, and Sensor.

Position tracking with AI visibility overlay. Keyword and backlink intelligence. Competitor analysis across both traditional search and AI experiences.

Semrush expands an existing SEO workflow rather than replacing it. If your team already runs Semrush for SEO, adding AI visibility keeps everything in one place.

Best for: SEO teams that want AI-related visibility signals next to search and links

Ahrefs added AI-related features including AI Suggestions, AI Search Intent, AI Translations, and AI Content Helper. For AI Search visibility specifically, Ahrefs "Brand Radar" includes indexes for AI Overviews, ChatGPT, and Perplexity.

Brand Radar AI indexes were announced as add-ons at $99/month each in a June 2025 product update.

Brand and competitor monitoring with AI visibility signals. Search demand context. Link intelligence. The core Ahrefs experience—backlinks, content explorer, keyword research—with AI layers added.

Ahrefs remains an SEO-first platform. AI visibility is additive, not central. It's useful for teams whose operating system is still SEO + content + links but who want AI signals available.

Best for: Lightweight AI visibility dashboards and reporting

Peec provides AI search analytics with visibility, position, and sentiment tracking across AI engines.

Exports, Looker Studio connector, and API options are core workflow elements. Peec is designed to feed into internal dashboards or client reporting systems rather than serve as a standalone command center.

Analytics and reporting layer. Peec works well for teams that already have their own action systems and need clean data inputs.

Best for: Organizations prioritizing enterprise-grade AI visibility analytics

Profound monitors visibility across AI answer experiences with an enterprise focus. A December 2024 product announcement added "Prompt Volumes" as a feature.

Analytics-first reporting with enterprise controls. Profound emphasizes SOC 2 Type II compliance, SSO, and premium support. This is a platform built for organizations with security requirements and structured procurement processes.

Analytics-first for enterprise programs. Teams typically use Profound to generate insights that inform execution across content, PR, and web—but execution happens elsewhere.

Best for: Larger brands and agencies that want broad AI model coverage and methodology depth

Evertune tracks across ChatGPT, AI Overviews, Gemini, Claude, and additional models. The platform distinguishes between base model responses and consumer app experiences—useful for understanding how raw model behavior differs from what users actually see.

Competitive benchmarking across AI models. "Prompt at scale" methodology. Coverage depth that supports enterprise measurement programs.

Enterprise programs with structured workflows. Evertune works for teams that need scale, repeatable measurement, and cross-model comparisons.

Best for: Prompt monitoring and AI answer tracking on a smaller budget

Otterly covers Google AI Overviews, Google AI Mode, ChatGPT, Perplexity, Gemini, and Copilot. Daily automated prompt monitoring is standard. The platform attempts to simulate neutral outputs by avoiding personalization factors.

Prompt monitoring, brand reports, and agency-friendly features including workspaces and exports.

Fast setup, ongoing monitoring. Otterly works for teams that want visibility tracking without enterprise complexity or enterprise pricing.

Best for: Prompt-led tracking with volumes, competition, and citation analysis

Promptwatch tracks across ChatGPT, Claude, Gemini, and Perplexity. Key differentiators include prompt volume tracking (not just visibility but how often prompts are used), competitor comparison, and citation analysis.

Multi-language and geo support is available. Promptwatch's December 2025 changelog shows active development on exports, new languages, and dashboards.

Prompt tracking, prompt volumes, competitor comparison, citation analysis. The platform positions GEO as a prompt portfolio—similar to how teams manage paid search query sets.

Promptwatch is backed by a $1.4M seed round, per their agency page.

Prompt portfolio management. Promptwatch fits teams that think about GEO in terms of prompt sets rather than just keyword lists.

Best for: Teams that want AI Search tracking plus guided actions and workflows

AthenaHQ focuses on tracking, content gaps, citations, and actions. The platform connects visibility data to suggested next steps.

Citation tracking, content gap identification, and action workflows. Self-serve pricing is visible on the site—validate current pricing during evaluation.

A "command center" approach. AthenaHQ ties tracking to action for mid-market to enterprise teams.

Best for: Teams looking for a packaged GEO program with analytics plus execution support

Relixir positions itself as end-to-end GEO: analytics plus execution support rather than pure SaaS.

GEO reporting integrated with program delivery. The positioning is a structured program, not just a tool.

Teams aligning marketing and content production around GEO as a discipline. Relixir works when you want a structured program, not just a dashboard.

Best for: Teams that want LLM-specific tracking and content guidance

Anvil covers major LLMs with a focus on competitive comparisons and guidance on underperforming content. The platform positions itself as "the SEO platform for the AI era."

Competitor comparisons, content optimization guidance, LLM-native insights.

Anvil sits alongside existing SEO tooling. It's built for teams that want an LLM-native perspective without abandoning their current SEO stack.

No. GEO is additive to SEO, not a replacement.

AI engines pull from indexed content. If your content isn't findable, authoritative, and relevant in traditional search, it's unlikely to be cited in AI answers either.

The Yext research finding—86% of AI citations from brand-owned or managed sources—reinforces this. Your own properties still matter. GEO is about ensuring you appear in the AI layer on top of search, not instead of search.

At minimum:

Track these weekly. Everything else is useful context but not essential.

AI answers vary based on user history, location, and other personalization factors.

Quality tools address this by:

Ask vendors how they handle this. If they can't explain their methodology, the data may be unreliable.

It depends on your goal.

Citations drive traffic. The user can click through to your site. This is measurable and valuable.

Mentions drive awareness. The user sees your brand but may not visit. This still matters for top-of-funnel brand building.

For most marketing teams, citations are the primary metric. But if you're in a category where brand awareness is the bottleneck, mentions matter too.

The market for AI Search analytics tools is maturing fast. In 2024, most teams were still debating whether GEO mattered. In 2026, it's a board-level conversation.

Choose based on:

If you need a platform that reduces metric overload, explains why competitors show up, ships a data-driven GEO playbook that updates, and ties completed actions to outcomes—with human guidance available—HeyAmos is worth evaluating.

Start with a baseline report. See where you stand. Then decide how to act.